Hawk AI positions itself as an anti-money laundering (AML) solution for financial institutions, one that works by tracking suspicious and abnormal activity. With a new round of funding, the company looks to continue expanding its services and capabilities across a variety of industries.

Hawk AI has been expanding its product line and global presence, and has now announced a $17 million Series B round of investment. The funds will be used to bolster product development and international expansion. With an existing team of experts in machine learning, natural language understanding, AI, and robotics, the company is confident that it can match or beat the best competitors in this space.

Hawk AI has set out to change the paradigm of recovering illicit funds by using machine learning algorithms to detect and analyze patterns in data. With this technology, the company says it can identify suspicious activity and potential financial crimes much more efficiently than traditional methods. This could lead to a significant increase in the recovery of illicit funds, making them available for beneficial use by society as a whole.

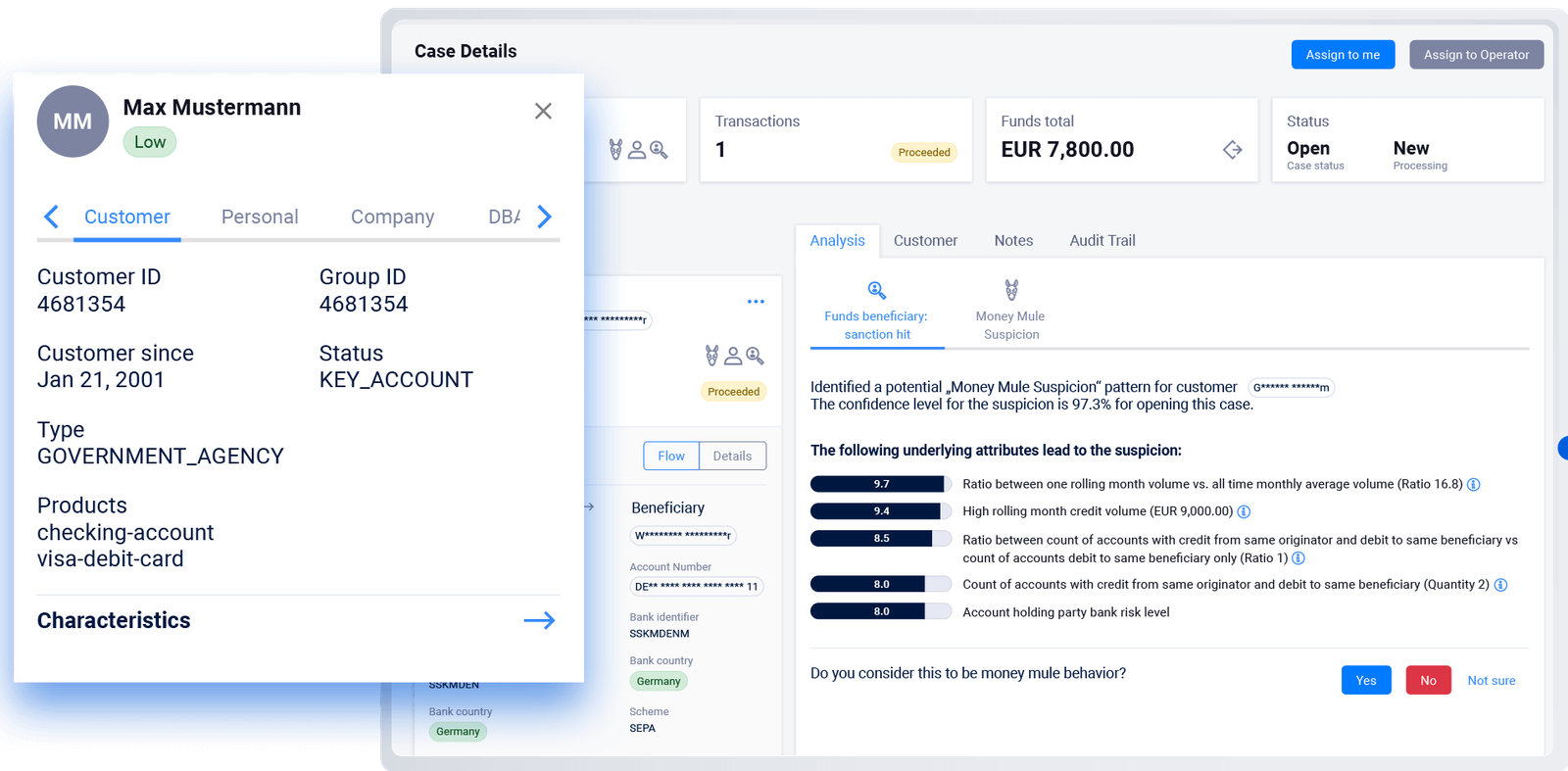

Hawk AI is a cloud-native, modular AML surveillance system that promises the “highest level of explainability” in its AI-powered decision-making engine. This is integral for audits and regulatory investigations, as the system aims to provide transparency into its findings and decisions.

There is a definite need for full explainability when it comes to artificial intelligence-driven decisions, according to Hawk AI cofounder and CEO Tobias Schweiger. Full explainability would go a long way in establishing trust and acceptance from financial institutions and regulators, who may be understandably skeptical of such automations.

Hawk AI helps banks and other financial institutions comply with AML regulations by providing transaction monitoring with explainable results. The service raises real-time alerts when suspicious activity is detected, so bank officials can take appropriate action quickly. In addition to helping banks stay compliant, the service also improves transparency for customers and businesses.

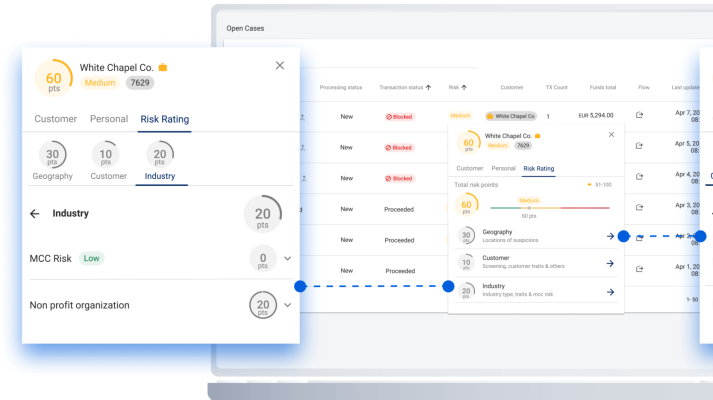

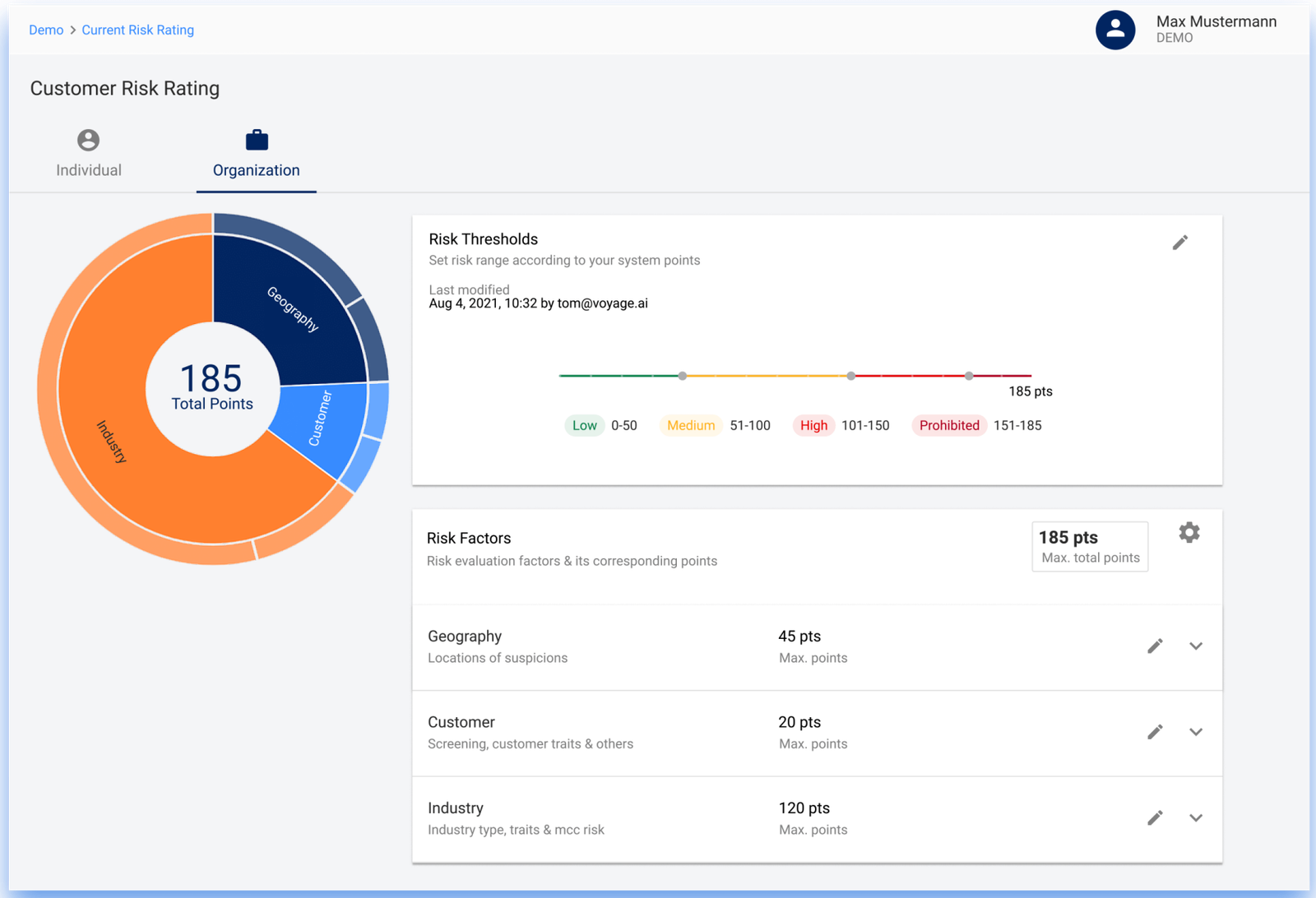

Hawk AI aims to help its customers manage the risk associated with their transactions, through the use of static data (such as product or geographical data) and dynamic data (such as transaction data). By using this information, customers can build their own risk-rating model, which allows them to identify and reduce the potential for fraud and other risky activities within their business.
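To make the idea of combining static and dynamic data concrete, here is a minimal sketch of a customer risk rating. It is not Hawk AI's model: the risk tables, the weights, the `high_value` threshold, and the band cut-offs are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical static risk tables (illustrative values, not Hawk AI's).
COUNTRY_RISK = {"DE": 0.1, "US": 0.2, "XX": 0.9}
PRODUCT_RISK = {"savings": 0.1, "crypto_wallet": 0.8}


@dataclass
class Customer:
    country: str                  # static data: geography
    product: str                  # static data: product type
    monthly_amounts: List[float]  # dynamic data: recent transaction amounts


def risk_score(customer: Customer, high_value: float = 10_000.0) -> float:
    """Blend a static and a dynamic component into a score between 0 and 1."""
    # Static component: average of country and product risk (0.5 if unknown).
    static = 0.5 * COUNTRY_RISK.get(customer.country, 0.5) \
        + 0.5 * PRODUCT_RISK.get(customer.product, 0.5)
    # Dynamic component: share of transactions at or above the threshold.
    amounts = customer.monthly_amounts
    dynamic = sum(a >= high_value for a in amounts) / len(amounts) if amounts else 0.0
    # Assumed weighting: 60% static, 40% dynamic.
    return round(0.6 * static + 0.4 * dynamic, 3)


def risk_band(score: float) -> str:
    """Map a score to a coarse band using assumed cut-offs."""
    if score >= 0.6:
        return "high"
    if score >= 0.3:
        return "medium"
    return "low"


retail = Customer("DE", "savings", [100.0, 200.0])
exposed = Customer("XX", "crypto_wallet", [20_000.0, 15_000.0])
print(risk_band(risk_score(retail)), risk_band(risk_score(exposed)))
```

In a real deployment, an institution would presumably replace the tables and weights with its own risk policy; the point here is only that both kinds of data feed a single rating.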

Black box

With its cloud-native credentials and SaaS business model, Hawk AI is positioning itself to compete globally in AI-driven financial crime detection.

The company is keen to stress its focus on addressing the “black box” world that AI and machine learning algorithms typically inhabit. Understanding why an algorithm made a specific decision is key, and companies need to be able to justify why a given customer was flagged as a potential fraudster. Beyond that surface-level understanding, however, there remains the question of where these algorithms get their data in the first place. Some experts fear that malicious actors could exploit this gap by feeding false information into such systems in order to skew results or take advantage of vulnerable people.

Hawk AI describes its customer risk rating technology as among the most advanced on the market. By analyzing customers’ spending patterns, it can help institutions identify which customers are high-risk and decide how to respond.

Hawk AI claims that its technology is unique in providing both an insight into the factors that led to a flag and an estimation of the expected range of normal behavior, which is said to be essential for determining whether a case qualifies as suspicious activity.
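The pairing of a flag with an expected range of normal behavior can be sketched as follows. This is a simple stand-in, assuming a mean plus or minus `k` standard deviations rule over a customer's past amounts; it is not Hawk AI's method, and the function names and the default `k` are hypothetical.

```python
from statistics import mean, stdev
from typing import Dict, List, Tuple


def expected_range(history: List[float], k: float = 3.0) -> Tuple[float, float]:
    """Return (low, high) bounds of normal amounts; needs at least 2 values."""
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation
    return max(0.0, mu - k * sigma), mu + k * sigma


def explain(amount: float, history: List[float], k: float = 3.0) -> Dict[str, object]:
    """Flag an amount outside the expected range and say why."""
    low, high = expected_range(history, k)
    flagged = not (low <= amount <= high)
    if flagged:
        reason = f"amount {amount:.2f} is outside expected range {low:.2f} to {high:.2f}"
    else:
        reason = f"amount {amount:.2f} is within expected range {low:.2f} to {high:.2f}"
    return {"flagged": flagged, "expected_range": (low, high), "reason": reason}


past_amounts = [100.0, 120.0, 110.0, 90.0, 105.0]
print(explain(5000.0, past_amounts)["reason"])
print(explain(115.0, past_amounts)["reason"])
```

Returning the range alongside the flag gives a compliance officer something to check the decision against, which is the kind of transparency the company describes.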

For AI to make decisions that benefit individuals, each decision must come with a clear justification. That is difficult when the algorithms behind the AI are developed in secret. Compliance officers need transparency into how these algorithms are developed in order to ensure that individuals benefit from their use.